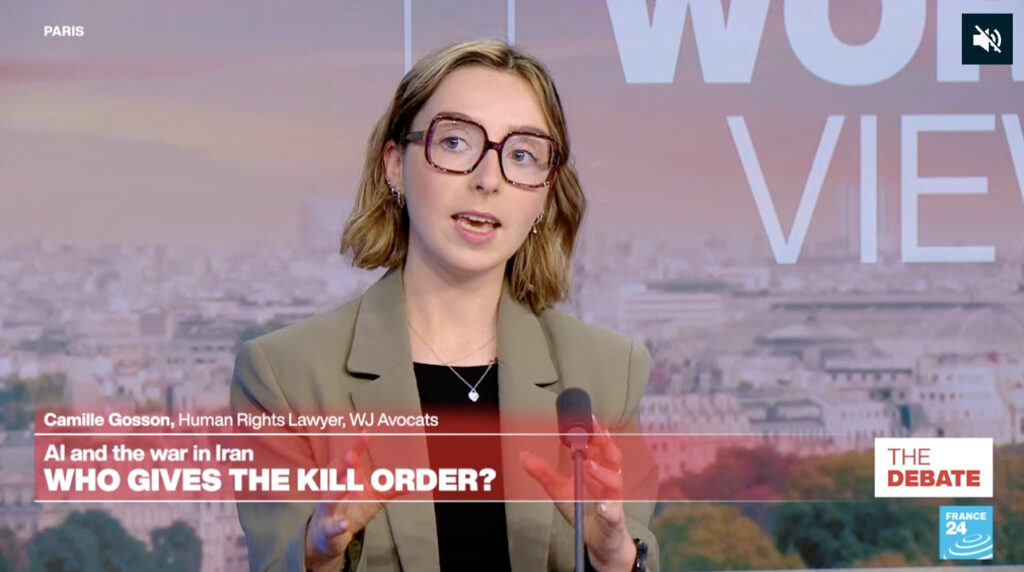

Camille Gosson spoke on The Debate on France 24 following the preliminary findings of a U.S. investigation suggesting that the February 2026 strike on a school in Iran, which killed 175 people, may have been based on outdated intelligence generated by an artificial intelligence system used by the U.S. military.

She pointed out that under the Rome Statute, characterizing an act as a war crime requires intent. Negligence alone is generally not sufficient unless a violation of international humanitarian law can be established, in particular with regard to the principle of precaution. She also emphasized that responsibility does not necessarily rest solely with public decision-makers: it may also extend to private actors, including company executives involved in the development or supply of artificial intelligence systems used in military operations.

Camille Gosson further noted that, according to the current consensus in international criminal law, the existing legal framework is already sufficient to prosecute the misuse of artificial intelligence, as recently reiterated by the Office of the Prosecutor of the ICC in a statement issued in December 2025. She nevertheless observed that the emergence of truly autonomous systems, meaning systems capable of deciding to carry out a strike without final human intervention, may eventually raise the question of whether that framework needs to be adapted.